We eat with our eyes

In the last post, we touched on the evolution of formats, user behavior, and user expectations when selecting what to watch. In this part, we walk through how we at Vionlabs use AI and emotional analysis to automatically create compelling artwork with emotional tags, enabling highly engaging content discovery and in-depth analysis of what makes a user click a certain poster or preview.

Vionlabs' in-house AI has been developed to solve this challenge, with a strong focus on the user interfaces of today and the future and on the formats needed by the new generation of streamers. We understand that content is about storytelling, and we love storytelling, so we kept that in mind as we trained our AI networks to learn a human way of engaging with stories in video format.

Vionlabs preview clips are generated using Vionlabs' unique fingerprint and emotional analysis data (Read more here). We generate new assets for each title and tag each asset with its most dominant emotion, for example angry, joyful, or sad. These tags can be used everywhere, from choosing the right clip to represent a title's mood to personalizing clips so that each user gets the clip that fits them best, optimizing engagement.

With emotional tags added to the preview clips, we can generate a diverse set of previews that covers different emotions.

The process is as follows:

- Extract frame-by-frame information about changes in the film's color space to detect jump cuts

- Use the Vionlabs time-series-based information to detect important segments of the content

- Cut the film into clips at these jump cuts and important segment markers

- Assign each clip an emotion tag by combining the clip with its corresponding VAD data and identifying the dominant emotion over the clip

- Constrain the list of clips to ones of sufficient length to capture attention (between 0:59 and 2 minutes)

- Further constrain the list to clips that start at least 5 minutes past the film's intro and (usually) exclude anything in the last 10%–30% of the film (to avoid credits and spoilers)

- Generate 8-10 previews per asset, depending on the structure of the story

- Generating clips for an asset takes about 1-2 minutes in total and can scale horizontally
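
The filtering and tagging steps above can be sketched roughly as follows. This is only an illustration under stated assumptions, not the Vionlabs implementation: the `Clip` structure, the per-second emotion labels, the `intro_end` parameter, and the 20% tail cutoff (picked from the 10%–30% range) are all hypothetical.

```python
from collections import Counter
from dataclasses import dataclass
from typing import List


@dataclass
class Clip:
    start: float          # seconds from the start of the film
    end: float            # seconds from the start of the film
    emotions: List[str]   # hypothetical per-second emotion labels for the clip

    @property
    def duration(self) -> float:
        return self.end - self.start


def dominant_emotion(clip: Clip) -> str:
    """Return the most frequent emotion label within the clip."""
    return Counter(clip.emotions).most_common(1)[0][0]


def select_candidates(
    clips: List[Clip],
    film_length: float,
    intro_end: float = 0.0,       # assumed: where the intro ends, in seconds
    min_len: float = 59.0,        # 0:59
    max_len: float = 120.0,       # 2 minutes
    intro_buffer: float = 300.0,  # 5 minutes past the intro
    tail_fraction: float = 0.2,   # assumed value within the 10%-30% range
) -> List[Clip]:
    """Keep clips that are long enough and avoid the intro and the ending."""
    earliest_start = intro_end + intro_buffer
    latest_end = film_length * (1 - tail_fraction)
    return [
        c for c in clips
        if min_len <= c.duration <= max_len
        and c.start >= earliest_start
        and c.end <= latest_end
    ]
```

For a 100-minute film, a 90-second clip starting at 6:40 would pass these filters, while a clip starting at 1:40, a 30-second clip, or a clip in the final 20% would be dropped.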

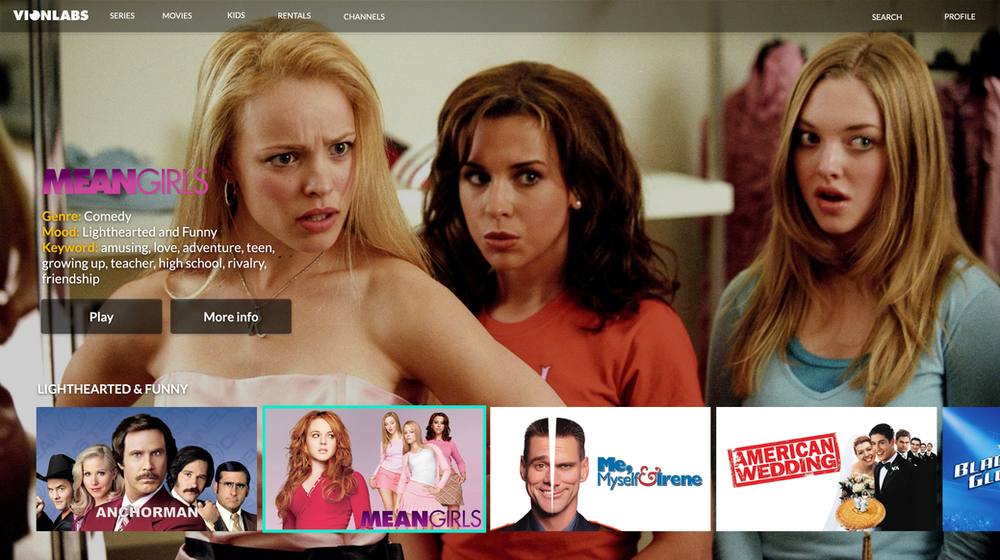

Combining the preview clips with the emotional tags, moods, and video story descriptors gives you a relevant, crisp, and engaging Visual Discovery experience:

Personalized preview clips are combined with Vionlabs Fingerprint Plus to create the essential presentation for getting viewers engaged: a high-quality personalized clip with predicted mood, genre, and descriptive keywords.

How to select previews & thumbnails

Artificial intelligence and computer vision technology have enabled us to auto-generate previews and thumbnails for titles and to add an emotional tag to each one, so you can determine the mood of the clip or thumbnail (Read more). But once you have all that data, which clip or thumbnail should you choose for which title? Deciding which asset should represent a title is both challenging and time-consuming. We therefore suggest trying one of these two methods for selecting the clip:

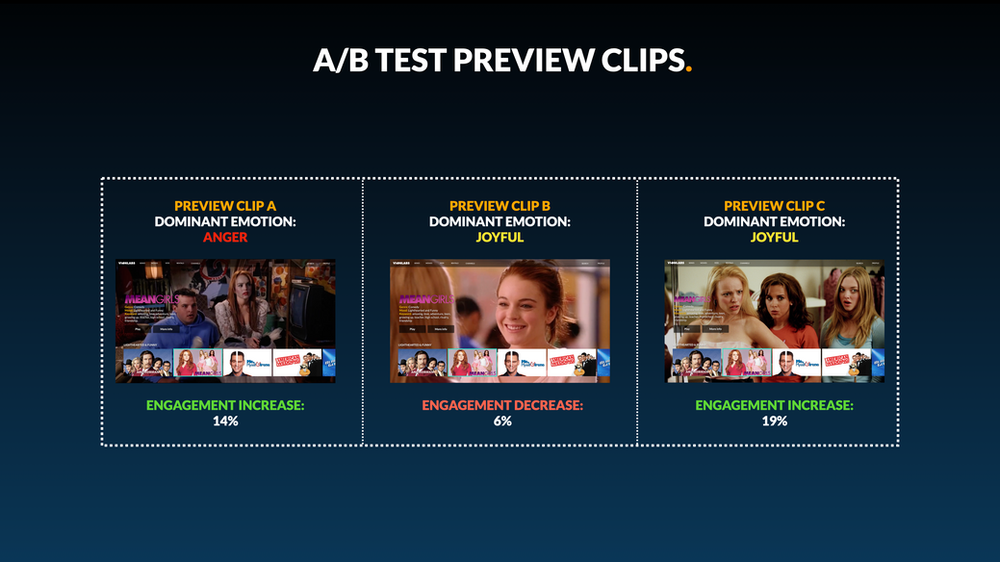

1. A/B test the Preview clips/Thumbnails

See which one has the best impact on your viewers and, from there, decide which thumbnail or clip should represent each title.

Use A/B testing to identify which clip generates the highest click-through rate, and then automatically use that clip to represent the title for a certain period of time. Repeat this process periodically, as user behavior and preferences change over time.
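
As a rough illustration of picking the winning clip by click-through rate, here is a minimal sketch. The stats layout, the clip IDs, and the `min_impressions` threshold are assumptions made for the example; a production A/B test would normally also check statistical significance before declaring a winner.

```python
from typing import Dict, Optional


def click_through_rate(clicks: int, impressions: int) -> float:
    """Clicks divided by impressions, or 0.0 if the clip was never shown."""
    return clicks / impressions if impressions else 0.0


def pick_winner(
    stats: Dict[str, Dict[str, int]],
    min_impressions: int = 1000,  # assumed cutoff to ignore under-exposed clips
) -> Optional[str]:
    """Return the clip ID with the highest CTR among sufficiently shown clips."""
    eligible = {
        clip_id: s for clip_id, s in stats.items()
        if s["impressions"] >= min_impressions
    }
    if not eligible:
        return None
    return max(
        eligible,
        key=lambda cid: click_through_rate(
            eligible[cid]["clicks"], eligible[cid]["impressions"]
        ),
    )


# Hypothetical results for three preview clips of one title
stats = {
    "clip_angry": {"impressions": 2000, "clicks": 100},
    "clip_joyful": {"impressions": 2000, "clicks": 140},
    "clip_sad": {"impressions": 50, "clicks": 40},
}
winner = pick_winner(stats)
```

Here `clip_sad` has the highest raw rate but too few impressions to be trusted, so the joyful clip is selected. Re-running the selection on fresh data at regular intervals covers the periodic repetition described above.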

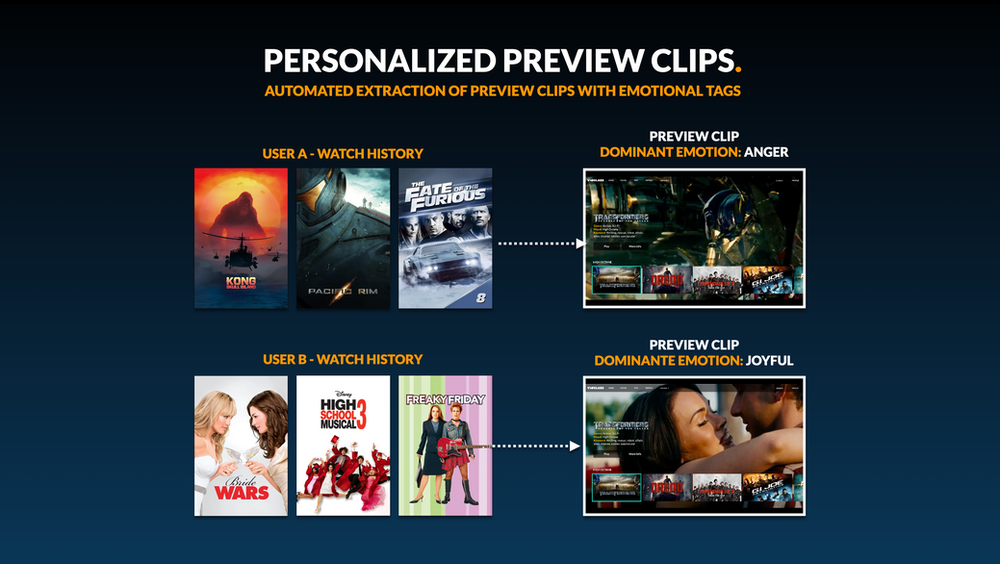

2. Personalize Preview clips/Thumbnails

User A gets the angrier clip, and user B gets the more joyful clip.

Alternatively, you can personalize the clips and thumbnails for each user based on their watch history. Choose clips with a more colorful, joyful mood for comedy lovers, and go for darker clips with an angry mood for your action/thriller lovers.
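
One simple way to express this kind of rule is sketched below. It is a sketch only: the genre-to-emotion mapping mirrors the comedy and action/thriller example in the text, while the function name, the watch-history format (a list of genre names), and the fallback to a default clip are assumptions.

```python
from collections import Counter
from typing import Dict, List

# Illustrative mapping based on the example above; a real system would
# likely learn these preferences rather than hard-code them.
GENRE_TO_EMOTION = {
    "comedy": "joyful",
    "action": "angry",
    "thriller": "angry",
}


def pick_clip_for_user(
    watch_history: List[str],          # assumed: genres of titles the user watched
    clips_by_emotion: Dict[str, str],  # emotion tag -> clip ID for one title
    default_clip: str,
) -> str:
    """Pick the clip whose emotion tag matches the user's most-watched genre."""
    if not watch_history:
        return default_clip
    top_genre = Counter(watch_history).most_common(1)[0][0]
    emotion = GENRE_TO_EMOTION.get(top_genre)
    # Fall back to the default when the genre or emotion has no matching clip
    return clips_by_emotion.get(emotion, default_clip)


clips = {"joyful": "clip_7", "angry": "clip_3"}
comedy_fan = pick_clip_for_user(["comedy", "comedy", "action"], clips, "clip_1")
thriller_fan = pick_clip_for_user(["thriller", "thriller", "comedy"], clips, "clip_1")
```

The default clip could be the A/B test winner from the first method, so that new users or users with unmapped tastes still see a well-performing preview.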

Conclusion – Streamers expect more

As we mentioned in part 1, users' expectations about what information should be available when engaging with an asset have changed. Nowadays, there is almost a greater risk of intimidating users with too much information, scaring them away instead of bringing them in. Relevance, “crispness” and diversity are at the heart of engaging today’s streamers. In just a few seconds, we need to represent the content accurately enough that the user can make a well-informed decision with minimum effort, without overwhelming them with irrelevant information.