Content Intelligence APIs

Integrate scene-level AI directly into your platform. From metadata enrichment and emotional analysis to dynamic thumbnails, clips, and ad-break intelligence, every API is built to plug into your existing workflows.

How It Works

1. Send Your Content

Point us to your assets via URL or cloud storage. Vionlabs ingests and processes video, audio, and image content at scale.

2. AI Analyzes Every Scene

Our models extract mood, emotion, narrative structure, characters, and visual features at the scene level, creating a complete content fingerprint.

3. Get Structured Intelligence

Receive rich, structured JSON payloads ready for your recommendation engine, CMS, ad server, or player. No proprietary formats.

Your Content, Fully Understood

Everything our AI knows about mood, story, characters, and emotion, delivered as structured metadata through one integration point.

Fingerprint+ API

Similar Titles API

Content Summary API

Emotions API

JIT Thumbnails API

JIT Clips API

Markers API

Contextual Ad-Break API

Content Safety API

Explore the API Docs

Thumbnails

Preview Clips

Smart Lists

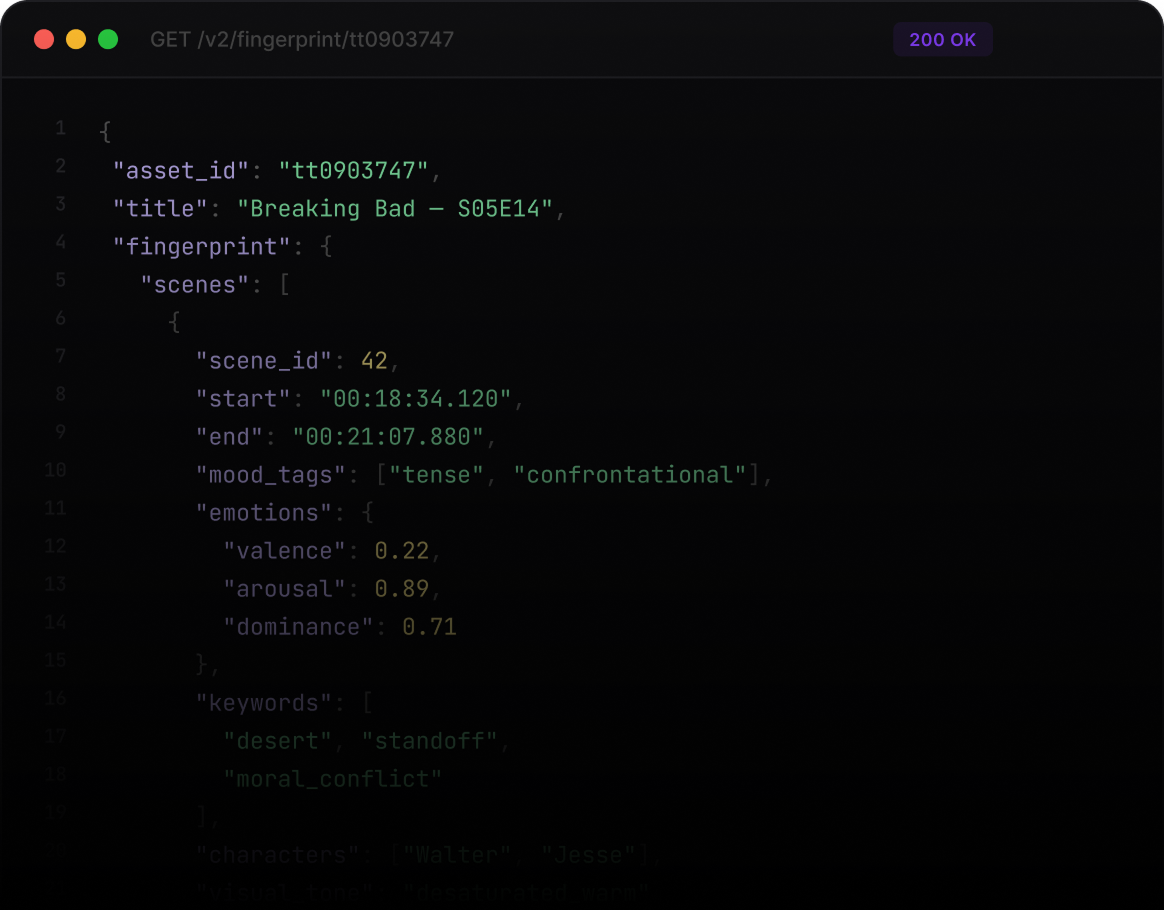

Deep Scene-Level Content Understanding

Fingerprint+ transforms video into structured, machine-readable intelligence.

By analyzing visual signals, dialogue, audio cues, and narrative context across every scene, the API generates semantic fingerprints and rich metadata that describe the content beyond traditional editorial tagging.

This includes content embeddings, mood signals, genres, keywords, language detection, and automatically generated multilingual synopses.

Features

Content Fingerprint Embeddings: Vector representations capturing the semantic structure of a title for similarity search and recommendation

Mood & Emotion Signals: Quantified mood indicators and descriptive mood tags derived from scene dynamics

Genres & Keywords: Automatically extracted thematic and narrative descriptors

Language Detection: Identification of the primary spoken language

AI-Generated Synopses: Short and long summaries generated automatically in multiple languages
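
As an illustration of how fingerprint embeddings could feed similarity search and recommendation, here is a minimal Python sketch. The title IDs, the three-dimensional vectors, and the `most_similar` helper are hypothetical; the real embedding size and response format are not described on this page and are assumed here.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction, so treat it as unrelated
    return dot / (norm_a * norm_b)


def most_similar(query_id, embeddings, top_k=2):
    """Rank every other title by cosine similarity to the query title."""
    query = embeddings[query_id]
    scored = [
        (title_id, cosine_similarity(query, vector))
        for title_id, vector in embeddings.items()
        if title_id != query_id
    ]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Hypothetical embeddings keyed by title ID (assumed shape, not a documented format).
EMBEDDINGS = {
    "title-a": [0.9, 0.1, 0.0],
    "title-b": [0.8, 0.2, 0.1],
    "title-c": [0.0, 0.1, 0.9],
}

if __name__ == "__main__":
    for title_id, score in most_similar("title-a", EMBEDDINGS):
        print(title_id, round(score, 3))
```

For a large catalog, a brute-force scan like this would normally be replaced by a vector index, but the ranking logic stays the same.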

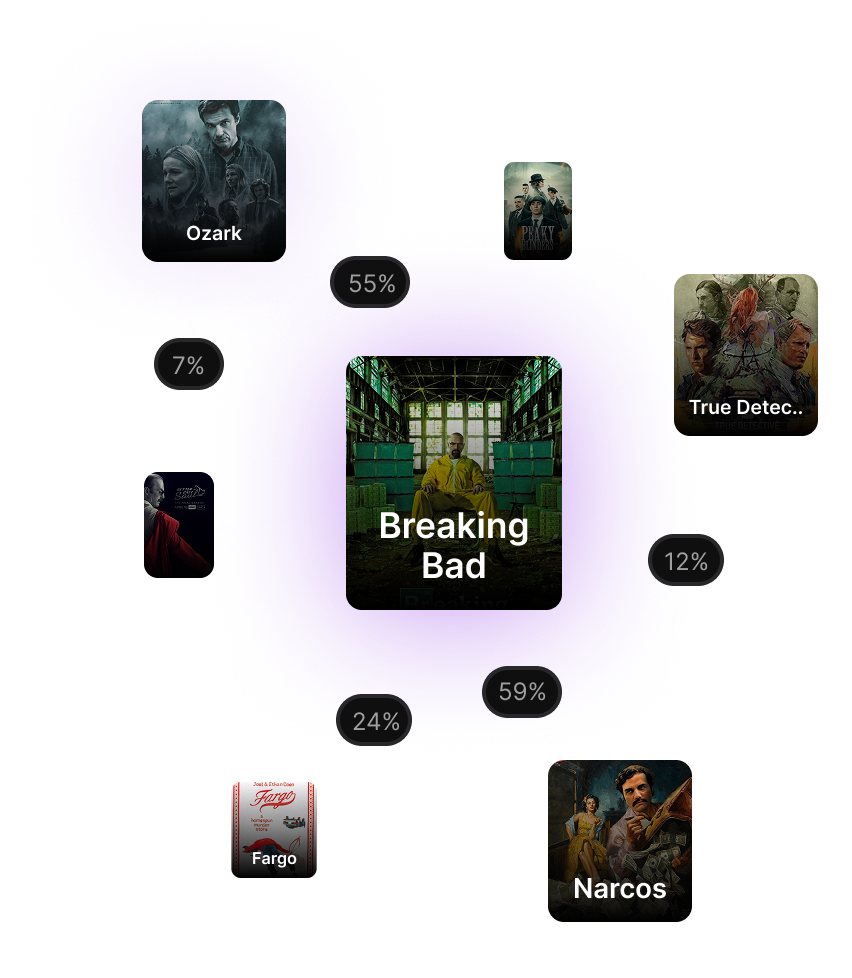

Smart Lists

Transform Titles into Connected Experiences

Similarity intelligence converts isolated titles into dynamic networks of related content.

Instead of static “related items,” platforms gain adaptive, behavior-driven content relationships.

Smart Lists

Automated Understanding of Information-Heavy Content

Content summaries provide concise, structured insights for complex or information-dense assets.

Features

Summary: Concise, AI-generated description capturing the narrative essence of the asset

Keywords: Semantic signals designed to enhance discovery, search, and navigation

Structured Sections: Enhanced accessibility and navigation

Ad-Breaks

Binge Markers

Understand Emotional Dynamics Across Content

Emotion intelligence reveals how content evolves across time, scenes, and narrative structures.

Features

Temporal Emotion Mapping: Continuous emotional signals across the full content timeline

Emotion Probability Signals: Probabilistic detection per segment with intensity scoring

Structured Emotional Dimensions: Quantitative modeling via valence, arousal, and dominance

Scene-Level Precision: Emotion intelligence aligned with temporal boundaries for playback workflows
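
To make the valence, arousal, and dominance dimensions concrete, the sketch below shows one way a client might summarize a timeline of emotion segments. The payload shape, field names, and value ranges are assumptions for illustration only; they are not taken from the Emotions API documentation.

```python
from dataclasses import dataclass


@dataclass
class EmotionSegment:
    start: float      # segment start, in seconds
    end: float        # segment end, in seconds
    valence: float    # negative-to-positive feeling (assumed range -1..1)
    arousal: float    # calm-to-intense (assumed range 0..1)
    dominance: float  # submissive-to-in-control (assumed range 0..1)


def parse_segments(payload):
    """Turn the hypothetical JSON payload into typed segments."""
    return [EmotionSegment(**item) for item in payload["segments"]]


def peak_arousal(segments):
    """Return the segment with the highest arousal value."""
    return max(segments, key=lambda s: s.arousal)


def mean_valence(segments):
    """Duration-weighted mean valence across the whole timeline."""
    total = sum(s.end - s.start for s in segments)
    if total == 0:
        return 0.0
    return sum(s.valence * (s.end - s.start) for s in segments) / total


# Hypothetical response body (assumed schema).
SAMPLE_PAYLOAD = {
    "segments": [
        {"start": 0.0, "end": 60.0, "valence": 0.2, "arousal": 0.3, "dominance": 0.5},
        {"start": 60.0, "end": 90.0, "valence": -0.6, "arousal": 0.9, "dominance": 0.4},
        {"start": 90.0, "end": 180.0, "valence": 0.5, "arousal": 0.4, "dominance": 0.6},
    ]
}

if __name__ == "__main__":
    segments = parse_segments(SAMPLE_PAYLOAD)
    print("Most intense segment starts at", peak_arousal(segments).start)
    print("Mean valence:", round(mean_valence(segments), 3))
```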

Thumbnails

Dynamic Thumbnail Generation

Thumbnails are among the most powerful decision triggers in streaming interfaces.

The JIT Thumbnails API enables platforms to generate thumbnails dynamically based on scene-level AI analysis rather than relying on static artwork or manual frame selection.

Thumbnails can be tailored to reflect specific moments, characters, moods, and visual presentation requirements.

Features

Scene-Aware Frame Selection: Thumbnails from visually and narratively relevant moments

Character-Driven Visuals: Emphasize specific characters or interactions

Composition & Framing Control: Adapts to layout needs including positioning and close-ups

Visual Quality Optimization: Frames evaluated for clarity, brightness, and stability

Safety & Suitability Constraints: Respects brand and content-safety requirements

Vertical & Cross-Platform Formats: Optimized for horizontal, vertical, and mobile-first

Integrated Branding Support: Optional logo insertion for consistent brand presentation

Clips

Dynamic Clip Generation & Intelligence

Short-form video assets have become a core driver of discovery, engagement, and monetization across modern streaming and mobile-first environments.

The JIT Clips API transforms clip creation from a manual editorial task into an intelligent, AI-driven system.

Instead of manually browsing timelines and cutting scenes, platforms can dynamically generate clips aligned with narrative structure, emotional tone, character presence, visual quality, and content suitability requirements.

Clips evolve from simple excerpts into decision-ready assets enriched with contextual intelligence.

Content-Aware Selection: Clips from narratively and visually relevant moments

Semantic Clip Discovery: Free-form search to find clips by mood, theme, or situation

Narrative Boundary Alignment: Respects scene transitions and speech dynamics

Character-Driven Selection: Emphasize specific characters or interactions

Editorial & Brand Control: Aligns with safety policies and creative constraints

Vertical & Cross-Platform Formats: Adapts to horizontal, vertical, and mobile-first

Balanced Clip Distribution: Consistent representation across the content timeline

Precision Clip Boundaries: Clips are delivered with exact temporal and frame-level definitions

Relevance & Ranking Signals: Each clip includes contextual scoring for prioritization and optimization

Semantic & Discovery Context: Generated assets retain the logic behind clip selection

Character & Scene Intelligence: Structured signals describing visible entities and scene composition

Safety & Suitability Signals: Automated classification indicators supporting brand-safe (profanity / nudity) workflows

Emotional Dynamics: Clips enriched with viewer-relevant emotional dimensions

Structured Metadata Descriptors: Mood, themes, environments, and keywords embedded within each asset

Multi-Asset Delivery: Production-ready clips accompanied by associated preview imagery

Intro, Recap & Credit Detection

Playback Structural Intelligence

Viewer experience is shaped not only by content, but by how seamlessly playback adapts to narrative structure.

The Markers API automatically detects structural content segments such as intros, recaps, previews, and end credits with high temporal precision.

Instead of relying on manual tagging or editorial workflows, platforms gain a scalable intelligence layer that enables frictionless playback experiences and binge-optimized viewing flows.

Features

Automated Structural Detection: Identifies intros, recaps, previews, and credits automatically

Precise Segment Boundaries: Accurate start/end positions for playback control

Playback-Ready Markers: Immediate integration into skip and navigation workflows

Version-Aware Consistency: Predictable results across catalog updates

Catalog-Scale Automation: Structural intelligence across entire libraries
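
A player could use segment boundaries like these to drive a "Skip Intro" or "Skip Recap" button. The sketch below is a minimal example under assumed conventions: the marker dictionaries with `type`, `start`, and `end` keys (in seconds) and the `skip_target` helper are hypothetical, not the documented Markers API response.

```python
def skip_target(position, markers, skippable=("intro", "recap")):
    """Return the time to jump to if `position` falls inside a skippable
    segment, or None when no skip button should be shown."""
    for marker in markers:
        inside = marker["start"] <= position < marker["end"]
        if marker["type"] in skippable and inside:
            return marker["end"]
    return None


# Hypothetical markers for one episode (assumed schema, times in seconds).
MARKERS = [
    {"type": "recap", "start": 0.0, "end": 30.0},
    {"type": "intro", "start": 30.0, "end": 95.0},
    {"type": "credits", "start": 2520.0, "end": 2640.0},
]

if __name__ == "__main__":
    for position in (10.0, 45.0, 100.0, 2600.0):
        print(position, "->", skip_target(position, MARKERS))
```

Passing `skippable=("credits",)` would let the same helper drive a next-episode prompt during end credits.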

Ad-Breaks

Contextual Advertising

Narrative & Context-Aware Monetization

Advertising effectiveness is shaped not only by placement, but by contextual relevance and viewer perception.

The Contextual Ad-Break API identifies advertising opportunities by combining scene-level structure, emotional dynamics, semantic understanding, and content suitability signals.

Instead of treating ad breaks as purely temporal events, placements become narrative-aware and context-driven.

Features

Narrative-Aware Placement: Aligns with storytelling rhythm and scene dynamics

Semantic Context Intelligence: Each candidate enriched with scene themes and environments

Mood & Emotional Signals: Placements reflect emotional tone and narrative intensity

Confidence-Ranked Opportunities: Prioritization based on multi-model agreement

Brand & Safety Awareness: Content suitability for advertiser-safe workflows

IAB Taxonomy Alignment: Structured classification for content-aware advertising

Frame-Level Precision: High temporal and frame accuracy for seamless integration
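
One way an ad server might consume confidence-ranked candidates is sketched below: keep only brand-safe candidates above a confidence threshold, then pick the strongest ones while enforcing a minimum gap between breaks. The candidate fields (`time`, `confidence`, `brand_safe`), the thresholds, and the greedy selection strategy are all assumptions for illustration, not behavior of the Contextual Ad-Break API itself.

```python
def select_ad_breaks(candidates, min_confidence=0.7, min_gap=300.0, max_breaks=3):
    """Greedily choose the highest-confidence, brand-safe candidates that are
    at least `min_gap` seconds apart, returned in timeline order."""
    eligible = [
        c for c in candidates
        if c["confidence"] >= min_confidence and c["brand_safe"]
    ]
    eligible.sort(key=lambda c: c["confidence"], reverse=True)

    chosen = []
    for candidate in eligible:
        if len(chosen) == max_breaks:
            break
        far_enough = all(
            abs(candidate["time"] - kept["time"]) >= min_gap for kept in chosen
        )
        if far_enough:
            chosen.append(candidate)
    return sorted(chosen, key=lambda c: c["time"])


# Hypothetical candidates (assumed schema, times in seconds).
CANDIDATES = [
    {"time": 420.0, "confidence": 0.92, "brand_safe": True},
    {"time": 600.0, "confidence": 0.85, "brand_safe": True},
    {"time": 1260.0, "confidence": 0.88, "brand_safe": True},
    {"time": 1900.0, "confidence": 0.95, "brand_safe": False},
    {"time": 2100.0, "confidence": 0.65, "brand_safe": True},
    {"time": 2400.0, "confidence": 0.75, "brand_safe": True},
]

if __name__ == "__main__":
    for ad_break in select_ad_breaks(CANDIDATES):
        print(ad_break["time"], ad_break["confidence"])
```

With this sample data, the candidate at 600 seconds is skipped because it falls within five minutes of the stronger candidate at 420 seconds.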

Ad-Breaks

Contextual Advertising

Automated Detection of Sensitive Content

The Content Safety API automatically detects sensitive or potentially inappropriate content in video assets.

By analyzing dialogue, audio signals, and visual frames, the API identifies profanity in speech and nudity in visuals, enabling platforms to enforce editorial guidelines, parental controls, and brand safety policies at scale.

The API returns precise timestamps and confidence scores, allowing systems to automatically flag, filter, or restrict content segments.

Features

Profanity Detection

Identification of profanity within spoken dialogue

Timestamped segments where profanity occurs

Severity scores indicating the intensity of language

Asset-level classification labels

Nudity Detection

Frame-level detection of nudity in visual content

Timestamped frame positions

Confidence scores for detected visual signals

Safe / unsafe classification labels
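
Timestamps and confidence scores like these lend themselves to automated filtering. The sketch below drops low-confidence detections, pads the remainder, and merges overlapping spans into ranges a player could mute or restrict. The detection fields (`kind`, `start`, `end`, `confidence`), the threshold, and the padding are hypothetical choices, not the documented Content Safety API response.

```python
def restricted_ranges(detections, min_confidence=0.8, padding=1.0):
    """Merge confident detections into padded, non-overlapping time ranges."""
    spans = sorted(
        (max(0.0, d["start"] - padding), d["end"] + padding)
        for d in detections
        if d["confidence"] >= min_confidence
    )
    merged = []
    for start, end in spans:
        if merged and start <= merged[-1][1]:
            # Overlaps or touches the previous range: extend it.
            merged[-1] = (merged[-1][0], max(merged[-1][1], end))
        else:
            merged.append((start, end))
    return merged


# Hypothetical detections (assumed schema, times in seconds).
DETECTIONS = [
    {"kind": "profanity", "start": 10.0, "end": 11.5, "confidence": 0.95},
    {"kind": "profanity", "start": 12.0, "end": 13.0, "confidence": 0.90},
    {"kind": "nudity", "start": 300.0, "end": 304.0, "confidence": 0.60},
    {"kind": "nudity", "start": 500.0, "end": 510.0, "confidence": 0.85},
]

if __name__ == "__main__":
    for start, end in restricted_ranges(DETECTIONS):
        print(f"restrict {start:.1f}s to {end:.1f}s")
```

The threshold trades recall for precision: a stricter parental-control profile might lower `min_confidence`, while an editorial review queue might raise it.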

Built for Developers

Clean RESTful APIs. Structured JSON responses. Comprehensive documentation. Everything your engineering team needs to integrate content intelligence into your stack.

Comprehensive API documentation with sample code

Webhook support for async processing

Sandbox environment for testing

Built-in support for 99+ languages

Works With Your Stack

Vionlabs APIs integrate with the platforms and tools your team already uses. Available on Google Cloud Marketplace and AWS Marketplace.

Google Cloud Marketplace

AWS Marketplace

What You Can Build

Explore how Vionlabs transforms media

Book a demo to see Vionlabs’ AI in action: rich metadata, engaging artwork, and automation at scale that increase efficiency, so your team can focus on what matters.

In the 30-minute call, we will:

Understand your current goals and objectives

Discover how Vionlabs can increase engagement and efficiency