With the release of AINAR V5, we’re taking a monumental leap forward, building on the successes of AINAR V4 and introducing a suite of groundbreaking features that point to the future of content discovery with cognitive AI technology.

AINAR V5: The Future of Content Discovery is Conversational

With the unveiling of AINAR V5, we’re not merely presenting an upgrade; we’re introducing a paradigm shift in content discovery and user interaction. We’re thrilled to announce our pioneering new feature, AINAR Interact: vision-to-text translation. This innovation brings natural-prompted interactivity to AINAR and is set to redefine the way viewers of any streaming platform interact with content. The emphasis is on two-way communication between the platform and its users, ensuring a more intuitive, personalized, and efficient content discovery experience. AINAR Interact won a prestigious Best of Show Award at IBC 2023.

With AINAR Interact, users are invited into a dynamic world where their streaming platform listens, understands, and responds. The future of content discovery is conversational, personal, intuitive, and emotionally resonant. Additionally, V5 introduces features like contextual scene data mapping to IAB standards, an advanced AI-shorts generator with emotion prompting, and a scene boundary detector with ranking functionality for optimized ad-insertion.

Significant Uplift to Descriptor Model

One of the standout features of AINAR V5 is its enhanced descriptor model. Now capable of generating over 700 mood tags and a staggering 2300 time-coded keywords, V5 offers a significant improvement over the 70 mood tags and 1500 keywords of its predecessor, AINAR V4. This uplift ensures a more detailed and nuanced understanding of content, catering to a broader range of emotions and themes.

Think of the descriptor model as your content’s personal storyteller. It’s an advanced algorithm that watches and listens to audio and video content, extracting meaningful insights that go far beyond simple categorization. This model does the heavy lifting, generating over 700 mood tags and 2300 keywords for each piece of content it analyzes.

Simply put, the descriptor model is a content curator on steroids.

Here’s how this cutting-edge technology can be used to elevate any platform’s performance:

- Hyper-Personalized Recommendations: Imagine offering your users recommendations that match their mood, theme, and preferences with pinpoint accuracy. Our descriptor model analyzes over 700 mood tags and 2300 keywords, ensuring every user interaction is a perfect match.

- Precision in Content Search: With the power of our descriptor model, users can search for content using specific keywords or moods, transforming the search experience.

- Personalized User Experiences: With our model, it is possible to design personalized user journeys, offering collections and playlists that resonate with each individual user. Whether it’s heartwarming dramas or crappy horror with sharks, we’ve got you covered.

- Enhanced Content Discovery: A platform isn’t just about serving content; it’s about delivering exceptional content discovery. Our model uncovers the hidden gems within content libraries, providing users with unique and engaging options they might have missed otherwise.
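As a rough illustration of how mood tags and keywords could drive recommendations, here is a minimal sketch that ranks titles by tag overlap with a user's tastes. The `Title` shape, the `recommend` function, the equal weighting, and the use of Jaccard similarity are all assumptions made for this example; they are not a description of how AINAR itself scores content.

```python
from dataclasses import dataclass, field


@dataclass
class Title:
    """A catalogue item with descriptor output (hypothetical shape)."""
    name: str
    mood_tags: set[str] = field(default_factory=set)
    keywords: set[str] = field(default_factory=set)


def jaccard(a: set[str], b: set[str]) -> float:
    """Overlap between two tag sets, from 0.0 (disjoint) to 1.0 (identical)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def recommend(titles, liked_moods, liked_keywords, top_n=3):
    """Rank titles by the average of mood overlap and keyword overlap.

    Assumption: moods and keywords are weighted equally. Titles with no
    overlap at all are dropped from the result.
    """
    scored = []
    for title in titles:
        score = 0.5 * jaccard(title.mood_tags, liked_moods) \
            + 0.5 * jaccard(title.keywords, liked_keywords)
        scored.append((score, title.name))
    # Highest score first; ties broken alphabetically for stable output.
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [name for score, name in scored[:top_n] if score > 0]


catalogue = [
    Title("Shark Night", {"tense", "campy"}, {"shark", "beach"}),
    Title("Quiet Hearts", {"heartwarming", "tender"}, {"family", "reunion"}),
    Title("Road Fury", {"tense", "exhilarating"}, {"car chase", "desert"}),
]
print(recommend(catalogue, {"tense", "campy"}, {"shark"}, top_n=2))
```

A production recommender would likely weight tags by confidence and combine them with viewing history; the point here is only that richer tag sets give the matching step more to work with.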

The descriptor model in AINAR V5 is a secret weapon designed to skyrocket user engagement and drive content personalization to the next level for any platform.

While AINAR V4 was a significant advancement in cognitive AI technology, V5 takes it a step further. V5 boasts over 700 mood tags and 2300 keywords, a substantial leap from V4’s 70 mood tags and 1500 keywords.

Time-coded Descriptors and Mood-tags

We all know that in content discovery precision is key. That’s why we developed time-coded descriptors and mood-tags. This feature empowers editorial teams to dive deep into each scene’s nuances. Whether curating content that showcases exhilarating car chases, heartfelt romantic moments, or adrenaline-packed sequences, AINAR V5 streamlines the process. With just a simple query, editorial teams can swiftly identify and select scenes that resonate with their target audience. This granular approach ensures that content curation is not only efficient but also tailored to the diverse tastes of viewers. For editorial teams, it’s a game-changer, offering a refined toolset to craft compelling content narratives.
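To make the idea of a "simple query" over time-coded tags concrete, the sketch below filters a list of tagged time ranges by label. The `TimedTag` record and the `find_scenes` helper are hypothetical names invented for this example, and the data layout is an assumption; the actual AINAR output format is not described here.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TimedTag:
    """One time-coded descriptor (hypothetical shape)."""
    start_s: float   # start of the tagged range, in seconds
    end_s: float     # end of the tagged range, in seconds
    label: str       # e.g. "car chase" or "romantic"
    kind: str        # "keyword" or "mood"


def find_scenes(tags, wanted, min_len_s=0.0):
    """Return (start, end, label) for every tag whose label is in `wanted`.

    Matching is case-insensitive; ranges shorter than `min_len_s` are skipped.
    Results are sorted by start time.
    """
    wanted_lower = {w.lower() for w in wanted}
    hits = [
        (t.start_s, t.end_s, t.label)
        for t in tags
        if t.label.lower() in wanted_lower and (t.end_s - t.start_s) >= min_len_s
    ]
    return sorted(hits)


tags = [
    TimedTag(620.0, 655.0, "car chase", "keyword"),
    TimedTag(120.0, 150.0, "romantic", "mood"),
    TimedTag(900.0, 905.0, "car chase", "keyword"),
]
# Car chases lasting at least ten seconds.
print(find_scenes(tags, ["Car Chase"], min_len_s=10))
```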

AINAR Contextual Advertising: Revolutionizing Advertising with Scene-Level Precision

AINAR V5 introduces contextual scene data that aligns with the Interactive Advertising Bureau (IAB) standards. This ensures that ads are not only contextually relevant but also adhere to IAB’s categories, optimizing ad campaigns for maximum ROI. The battle for viewer attention has never been fiercer and the age-old challenge remains: how to seamlessly integrate advertisements without disrupting the viewer’s experience. We have created AINAR Contextual Advertising, the solution that’s set to redefine the advertising landscape. But it’s not just about placing ads; it’s about placing them right. With scene-level precision, AINAR ensures that commercials aren’t awkwardly wedged into gripping scenes or pivotal dialogues. Instead, ads are seamlessly woven in at natural junctures in the narrative, ensuring a harmonious viewing experience. AINAR Contextual Advertising isn’t just another ad tool; it’s a paradigm shift. By intertwining deep learning with the art of storytelling, it promises advertisers unparalleled precision and viewers an unbroken narrative experience.

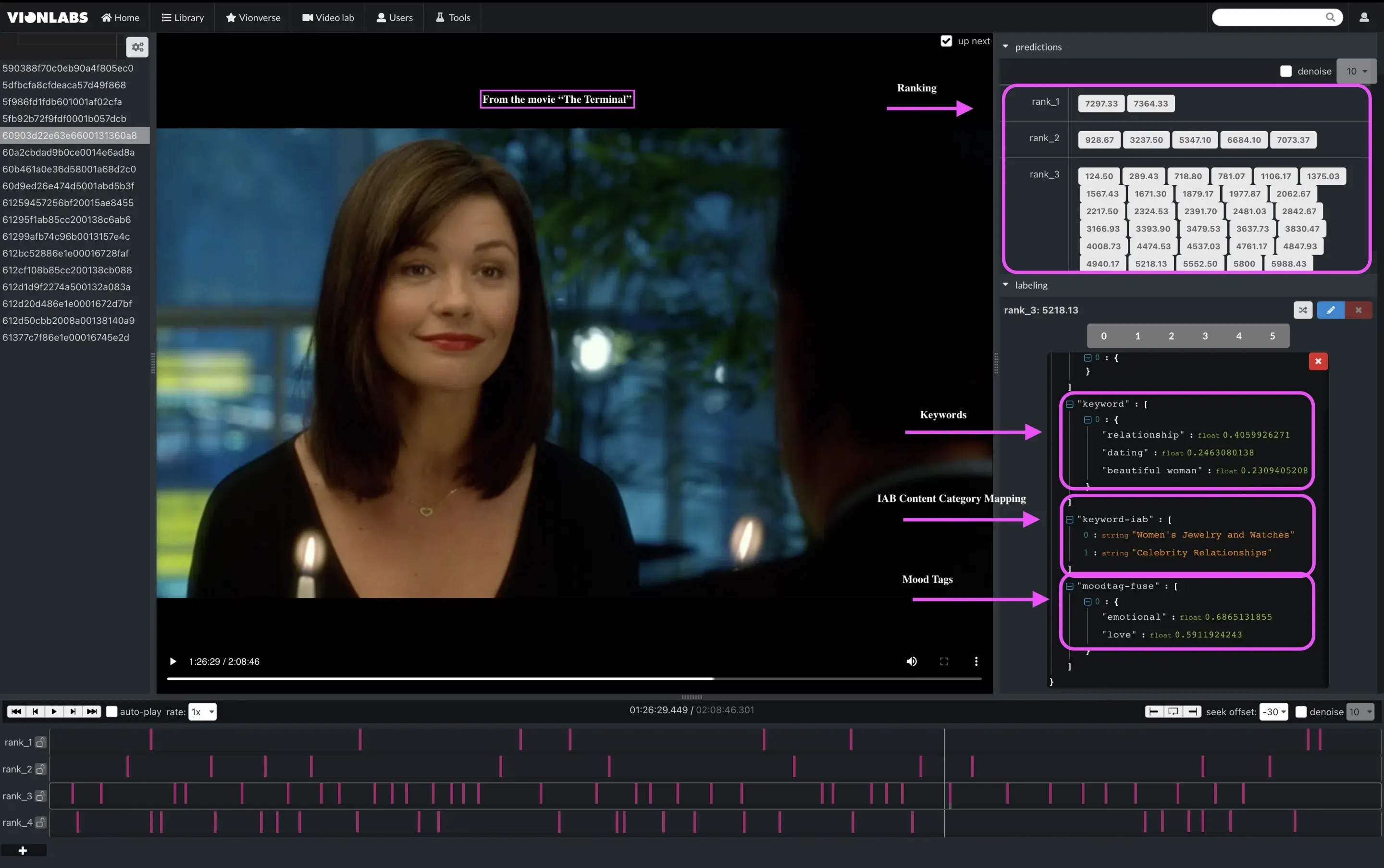

Scene Boundary Detector with Ranking Functionality

AINAR V5’s scene boundary detector is a game-changer for ad-insertion. By ranking the most appropriate moments for ad breaks, it ensures seamless integration of ads into the content, enhancing user experience and ad effectiveness.

The Ranking Structure Explained:

AINAR V5 employs four parallel networks to predict and rank ad breaks:

- Network 1: Black frame detector

- Network 2: Deep learning network analyzing the story’s flow

- Network 3: Scene boundary detector

- Network 4: Shot-similarity algorithm

These networks collaborate to create a ranking system:

- Rank 1: Requires full consensus from all networks, indicating the highest-quality ad break.

- Rank 2: Requires agreement from at least three networks.

- Rank 3: Requires agreement from at least two networks.

- Rank 4 (Gap Filler): Relies solely on the shot similarity network, filling gaps when higher-ranked ad breaks are scarce.

Furthermore, post-processing filters for speech ensure that ads don’t interrupt crucial discussions or narrations, preserving the integrity of the content.
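The ranking rules and the speech filter above can be sketched in a few lines. This is an illustrative reading of the rules, not AINAR's implementation: the network names, the boolean "vote" per network, the `margin_s` padding, and the decision to leave a candidate unranked when only one network other than shot similarity fires are all assumptions made for the example.

```python
from typing import Optional

# Hypothetical identifiers for the four parallel networks.
NETWORKS = ("black_frame", "story_flow", "scene_boundary", "shot_similarity")


def rank_ad_break(votes: dict) -> Optional[int]:
    """Map per-network agreement on one candidate to a rank (1 is best)."""
    agreeing = sum(bool(votes.get(name, False)) for name in NETWORKS)
    if agreeing == len(NETWORKS):
        return 1                      # full consensus
    if agreeing >= 3:
        return 2                      # at least three networks agree
    if agreeing >= 2:
        return 3                      # at least two networks agree
    if agreeing == 1 and votes.get("shot_similarity", False):
        return 4                      # gap filler: shot similarity alone
    return None                       # assumption: otherwise not a candidate


def overlaps_speech(time_s, speech_intervals, margin_s=0.5):
    """True if time_s falls inside any (start, end) speech range, padded by margin_s."""
    return any(start - margin_s < time_s < end + margin_s
               for start, end in speech_intervals)


def ranked_breaks(candidates, speech_intervals):
    """Rank (time_s, votes) candidates, dropping those that would cut into speech.

    Returns (rank, time_s) tuples sorted by rank, then by time.
    """
    kept = []
    for time_s, votes in candidates:
        rank = rank_ad_break(votes)
        if rank is None or overlaps_speech(time_s, speech_intervals):
            continue
        kept.append((rank, time_s))
    return sorted(kept)


all_agree = {name: True for name in NETWORKS}
candidates = [
    (300.0, all_agree),
    (610.0, {"shot_similarity": True}),
    (450.0, {"black_frame": True, "scene_boundary": True, "shot_similarity": True}),
    (800.0, all_agree),               # falls inside a speech range, so it is dropped
]
print(ranked_breaks(candidates, speech_intervals=[(799.0, 805.0)]))
```

Sorting by rank first means a consumer can take as many breaks as it needs from the top of the list and only reach the Rank 4 gap fillers when better candidates run out.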

This image is from a scene in The Terminal. To the right, you can see the ranking of the ad breaks, the time-coded keywords and mood tags, and the IAB Content Category mapping.

Advanced AI-Shorts Generator with Emotion Prompting

AINAR Generated Shorts is a state-of-the-art AI that listens and looks at video content and finds the key moments to automatically produce engaging short clips. AINAR Shorts helps streaming companies improve their content discovery experience. AINAR Shorts selects the most relevant clips while also optimizing for scene boundaries, overlaps, diversity, and mood. AINAR Shorts can be used with prompts by editorial teams to generate more refined and user-specific content. These prompts can dictate specific themes or parameters, such as focusing on certain characters or maintaining a particular energy level, allowing for a more tailored user experience.
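One simple way to picture clip selection that balances relevance, overlap, and mood diversity is a greedy pass over scored candidates. The sketch below is an assumption-laden illustration, not the AINAR Shorts algorithm: the `Clip` record, the relevance `score`, the `max_per_mood` cap, and the `prompt_mood` parameter are hypothetical, and candidate clips are assumed to be already aligned to scene boundaries.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Clip:
    """A candidate short, assumed to start and end on scene boundaries."""
    start_s: float
    end_s: float
    score: float   # hypothetical relevance score, higher is better
    mood: str      # dominant mood tag for the clip


def overlaps(a: Clip, b: Clip) -> bool:
    """True if the two clips share any stretch of the timeline."""
    return a.start_s < b.end_s and b.start_s < a.end_s


def select_shorts(clips, max_clips=3, max_per_mood=1, prompt_mood=None):
    """Greedily pick the best-scoring clips that do not overlap.

    Without a prompt, at most `max_per_mood` clips per mood are kept so the
    selection stays diverse. With `prompt_mood`, only clips of that mood are
    considered and the diversity cap is not applied.
    """
    pool = [c for c in clips if prompt_mood is None or c.mood == prompt_mood]
    chosen = []
    mood_counts = {}
    for clip in sorted(pool, key=lambda c: c.score, reverse=True):
        if len(chosen) == max_clips:
            break
        if any(overlaps(clip, other) for other in chosen):
            continue
        if prompt_mood is None and mood_counts.get(clip.mood, 0) >= max_per_mood:
            continue
        chosen.append(clip)
        mood_counts[clip.mood] = mood_counts.get(clip.mood, 0) + 1
    # Present the selection in timeline order.
    return sorted(chosen, key=lambda c: c.start_s)


clips = [
    Clip(0, 30, 0.9, "tense"),
    Clip(20, 50, 0.8, "funny"),
    Clip(60, 90, 0.7, "tense"),
    Clip(100, 130, 0.6, "funny"),
]
print(select_shorts(clips))                        # diverse, non-overlapping picks
print(select_shorts(clips, prompt_mood="tense"))   # editorial prompt for one mood
```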

AINAR V5 is not just an upgrade; it’s a revolution in cognitive AI technology. As datasets grow and AI research advances, AINAR continues to evolve, promising even more capabilities in future releases.

Update: There is now a new release: AINAR Version 6, read more here.

FAQ for AINAR V5: The Future of Content Discovery

1. What is AINAR V5 and how does it differ from AINAR V4? AINAR V5 is the latest release in the AINAR series, introducing groundbreaking enhancements in cognitive AI technology for content discovery. Unlike its predecessor, AINAR V4, the V5 version boasts over 700 mood tags and 2300 time-coded keywords, improving the granularity and accuracy of content recommendations and search functionalities.

2. What is AINAR Interact and how does it change user interaction with streaming platforms? AINAR Interact is a new feature in AINAR V5 that introduces vision-to-text translation, enabling a more conversational and interactive content discovery experience. This feature allows the streaming platform to engage in two-way communication with its users, making the process of finding content more intuitive and personalized.

3. How does the advanced AI-shorts generator with emotion prompting work in AINAR V5? The advanced AI-shorts generator in AINAR V5 utilizes AI to analyze video content and identify key moments to produce engaging short clips. This tool can be prompted with specific themes or emotional cues, allowing content providers to create tailored clips that resonate with the desired audience’s mood and preferences.

4. How does the scene boundary detector with ranking functionality in AINAR V5 work? The scene boundary detector in AINAR V5 identifies optimal moments for inserting ads without disrupting the viewer’s experience. It uses four parallel networks to analyze content and rank possible ad insertion points based on criteria like story flow and scene boundaries, ensuring ads are placed at the most appropriate times during content playback.

5. What is AINAR Contextual Advertising and how does it benefit advertisers and viewers? AINAR Contextual Advertising leverages scene-level data that conforms to Interactive Advertising Bureau (IAB) standards to place ads with precision within the content. This feature ensures that advertisements are contextually relevant and seamlessly integrated into the viewing experience, thereby enhancing viewer engagement and maximizing advertising ROI.
